My personal bias against SANs in cloud architectures is well documented; however, I am in the minority at my employer (Dell), and few enterprise IT shops share my view. In his recent post about the CAP theorem, Dave McCrory has persuaded me to look beyond their failure to bask in my flawless reasoning. Apparently, this crazy CAP thing explains why some people love SANs (enterprise) and others don’t (clouds).

The deal with CAP is that you can only have two of Consistency, Availability, and Partition Tolerance. Since everyone wants Availability, the choice is really between Consistency and Partition Tolerance. In pursuit of Availability, you’ve got two approaches:

- Legacy applications tried to eliminate faults to achieve Consistency with physically redundant scale-up designs.

- Cloud applications assume faults to achieve Partition Tolerance with logically redundant scale-out designs.
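To make the two approaches a bit more concrete, here is a toy Python sketch (my own illustration, not from Dave’s post) of a two-replica store during a network partition. The class names, the `partitioned` flag, and the last-writer-wins `heal()` step are all hypothetical. The consistency-first store refuses any write it cannot apply to every replica, which is why that design leans so hard on eliminating faults; the availability-first store keeps accepting writes, lets the copies diverge, and reconciles once the partition heals. A single shared counter stands in for timestamps, a simplification that a real partitioned system could not rely on.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """One copy of the data in a hypothetical two-replica store."""
    name: str
    data: dict = field(default_factory=dict)


class ConsistencyFirstStore:
    """Every write must reach all replicas; during a partition, writes are refused."""

    def __init__(self):
        self.replicas = [Replica("a"), Replica("b")]
        self.partitioned = False

    def write(self, key, value):
        if self.partitioned:
            # Cannot reach every replica, so refuse rather than diverge (availability is lost).
            return False
        for r in self.replicas:
            r.data[key] = value
        return True


class AvailabilityFirstStore:
    """Each replica accepts writes locally; copies may diverge until reconciled."""

    def __init__(self):
        self.replicas = [Replica("a"), Replica("b")]
        self.partitioned = False
        self.clock = 0  # simplification: a shared counter standing in for timestamps

    def write(self, key, value, replica_index=0):
        self.clock += 1
        # During a partition only the local replica sees the write.
        targets = [self.replicas[replica_index]] if self.partitioned else self.replicas
        for r in targets:
            r.data[key] = (self.clock, value)
        return True

    def read(self, key, replica_index=0):
        return self.replicas[replica_index].data[key][1]

    def heal(self):
        # Last-writer-wins reconciliation: keep the entry with the highest counter value.
        self.partitioned = False
        merged = {}
        for r in self.replicas:
            for key, entry in r.data.items():
                if key not in merged or entry[0] > merged[key][0]:
                    merged[key] = entry
        for r in self.replicas:
            r.data = dict(merged)


cf = ConsistencyFirstStore()
cf.partitioned = True
print(cf.write("x", 1))  # False: the write is rejected

ap = AvailabilityFirstStore()
ap.partitioned = True
ap.write("x", 1, replica_index=0)
ap.write("x", 2, replica_index=1)
print(ap.read("x", 0), ap.read("x", 1))  # 1 2: replicas have diverged
ap.heal()
print(ap.read("x", 0), ap.read("x", 1))  # 2 2: reconciled after the partition heals
```

Last-writer-wins is only one possible reconciliation policy, picked here because it is short; it silently discards the losing write, which is exactly the kind of trade-off AP designs ask applications to accept.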

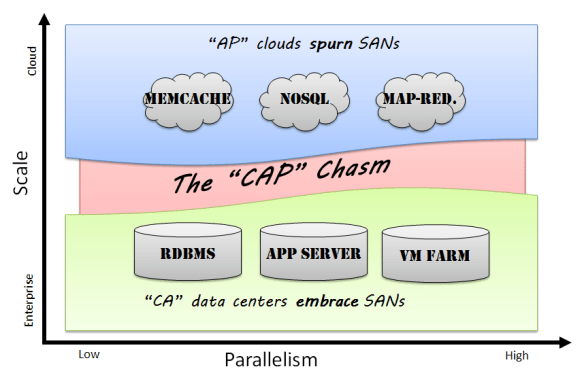

According to CAP, legacy and cloud approaches are so fundamentally different that they create a “CAP Chasm”: even the infrastructure fabric needed to deploy these applications is different.

As a cloud geek, I consider the inherent cost and scale constraints of a CA approach far too limiting. My firsthand experience is that our customers and partners share my view: they have embraced AP patterns. These patterns make more efficient use of resources, dictate simpler infrastructure layouts, scale like hormone-crazed rabbits at a carrot farm, and can be deployed on less expensive commodity hardware.

As a CAP-theorem-enlightened IT professional, I can finally accept that there are other intellectually valid infrastructure models.

See Mom? I can play nicely with others after all.