I’ve been talking with a lot of OpenStack people about my frustrating attempts at hybrid work across seven OpenStack clouds [OpenStack Session Wed 2:40]. This post documents the behavior Digital Rebar expects from the clouds we have integrated with so far. At RackN, we use this pattern for both cloud and physical automation.

Sunday, I found myself back in front of the Board talking about the challenge that implementation variation creates for users. Ultimately, the question “does this harm users?” is answered by “no, they just leave for Amazon.”

I can’t stress this enough: it’s not about APIs! The challenge is twofold: implementation variance between OpenStack clouds and variance between OpenStack and AWS.

The obvious and simplest answer is that OpenStack implementers need to conform more closely to AWS patterns (once again, NOT the APIs).

Here are the eight Digital Rebar node allocation steps [and my notes about general availability on OpenStack clouds]:

- Add node specific SSH key [YES]

- Get Metadata on Networks, Flavors and Images [YES]

- Pick correct network, flavors and images [NO, each site is distinct]

- Request node [YES]

- Get PUBLIC address for node [NO, most OpenStack clouds do not provide external access by default]

- Log in to system using node SSH key [PARTIAL, the account name varies]

- Add root account with Rebar SSH key(s) and remove password login [PARTIAL, does not work on some systems]

- Remove node specific SSH key [YES]
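To make the eight steps concrete, here is a minimal Python sketch of the allocation flow, assuming a hypothetical in-memory `FakeCloud` driver in place of a real cloud API. `FakeCloud`, `Node`, `allocate_node`, and the `CANDIDATE_USERS` list are illustrative names, not Digital Rebar code, and the SSH login and root-account changes are simulated by editing the node's key table. It mainly shows where the OpenStack variances bite: step 3 needs a site-specific chooser, step 5 fails without a public address, and step 6 has to guess the login account.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple

# Default accounts commonly baked into cloud images (an assumption; real images vary).
CANDIDATE_USERS = ("ubuntu", "centos", "cloud-user", "ec2-user", "root")


@dataclass
class Node:
    """Hypothetical view of a provisioned node."""
    name: str
    public_ip: Optional[str]
    login_user: str  # account the image actually accepts
    keys: Dict[str, Set[str]] = field(default_factory=dict)  # user -> authorized keys
    password_login: bool = True


class FakeCloud:
    """In-memory stand-in for a cloud driver; all method names are hypothetical."""

    def __init__(self, catalog: Dict[str, List[str]], login_user: str = "ubuntu",
                 public: bool = True):
        self.catalog = catalog
        self.login_user = login_user
        self.public = public  # does the cloud hand out public addresses by default?
        self.keypairs: Dict[str, str] = {}

    def add_keypair(self, name: str, public_key: str) -> None:
        self.keypairs[name] = public_key

    def remove_keypair(self, name: str) -> None:
        del self.keypairs[name]

    def metadata(self) -> Dict[str, List[str]]:
        return self.catalog

    def create_node(self, name: str, network: str, flavor: str, image: str,
                    keypair: str) -> Node:
        # network/flavor/image are accepted but ignored by this fake.
        ip = "203.0.113.10" if self.public else None  # RFC 5737 documentation address
        node = Node(name, ip, self.login_user)
        node.keys[self.login_user] = {self.keypairs[keypair]}
        return node


Chooser = Callable[[Dict[str, List[str]]], Tuple[str, str, str]]


def allocate_node(cloud: FakeCloud, name: str, node_key: str,
                  rebar_keys: Set[str], choose: Chooser) -> Node:
    keypair = f"{name}-key"
    cloud.add_keypair(keypair, node_key)                   # 1. node-specific SSH key
    catalog = cloud.metadata()                             # 2. networks, flavors, images
    network, flavor, image = choose(catalog)               # 3. site-specific choice
    node = cloud.create_node(name, network, flavor, image, keypair)  # 4. request node
    if node.public_ip is None:                             # 5. reachable address?
        raise RuntimeError(f"{name}: no public address (floating IP needed)")
    # 6. Log in with the node key; the account name varies, so probe candidates.
    user = next((u for u in CANDIDATE_USERS
                 if node_key in node.keys.get(u, set())), None)
    if user is None:
        raise RuntimeError(f"{name}: no known account accepts the node key")
    # 7. Add root with the Rebar key(s) and turn off password login (simulated).
    node.keys.setdefault("root", set()).update(rebar_keys)
    node.password_login = False
    # 8. Remove the node-specific keypair from the cloud.
    cloud.remove_keypair(keypair)
    return node


# Usage: pick the last network and the first flavor and image on this site.
catalog = {"networks": ["private", "public"], "flavors": ["m1.small"],
           "images": ["ubuntu-14.04"]}
cloud = FakeCloud(catalog)
node = allocate_node(cloud, "n1", "ssh-ed25519 NODEKEY", {"ssh-ed25519 REBARKEY"},
                     lambda c: (c["networks"][-1], c["flavors"][0], c["images"][0]))
print(node.public_ip, sorted(node.keys))
```

A cloud that only hands out private addresses (`FakeCloud(catalog, public=False)` in this sketch) stops at step 5, which is the Floating IP gap discussed in this post.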

These steps work on every other cloud infrastructure that we’ve used. And they are achievable on OpenStack – DreamHost delivered this experience on their new DreamCompute infrastructure.

I think that this is very achievable for OpenStack, but we’re going to have to drive conformance and figure out an alternative to the Floating IP (FIP) pattern. IPv6, port forwarding, or adding FIPs by default would all work as part of the solution.

For Digital Rebar, the quick answer is to simply allocate a FIP for every node. We can easily make this a configuration option; however, it feels like a pattern fail to me. It’s certainly not a requirement from other clouds.
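That configuration option could look something like the minimal Python sketch below. `ProviderConfig`, `allocate_floating_ip`, and `reachable_address` are hypothetical names, and the `allocate_fip` callback stands in for whatever cloud-specific call actually attaches a floating IP; this is an illustration, not Digital Rebar's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProviderConfig:
    """Hypothetical per-cloud settings; the option name is illustrative."""
    allocate_floating_ip: bool = False


def reachable_address(public_ip: Optional[str], private_ip: str,
                      config: ProviderConfig,
                      allocate_fip: Callable[[str], str]) -> str:
    """Return an address the orchestrator can SSH to.

    Prefer the public address the cloud assigned; fall back to allocating a
    floating IP for the private address only when the option is enabled.
    """
    if public_ip is not None:
        return public_ip
    if config.allocate_floating_ip:
        return allocate_fip(private_ip)  # caller-supplied, cloud-specific hook
    raise RuntimeError(f"{private_ip} is not externally reachable and "
                       "floating IP allocation is disabled")


# Usage with a stub allocator standing in for a real cloud call.
fip = reachable_address(None, "10.0.0.5", ProviderConfig(allocate_floating_ip=True),
                        lambda ip: "203.0.113.20")
print(fip)
```

Keeping the fallback behind a flag means clouds that already assign public addresses never take that path; the FIP only appears where the cloud forces it.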

I hope this post provides specifics about delivering a more portable hybrid experience. What critical items do you want as part of your cloud ops process?

Here’s our write-up:


awareness, you can be more secure WITHOUT putting more work for developers.

awareness, you can be more secure WITHOUT putting more work for developers. 2016 is the year we break down the monoliths. We’ve spent a lot of time talking about monolithic applications and microservices; however, there’s an equally deep challenge in ops automation.

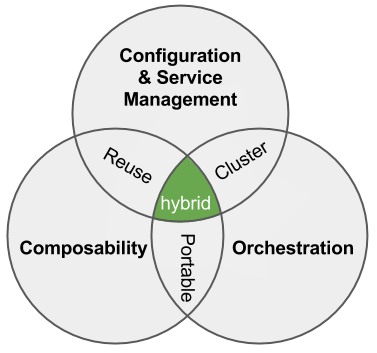

2016 is the year we break down the monoliths. We’ve spent a lot of time talking about monolithic applications and microservices; however, there’s an equally deep challenge in ops automation. So, what is open infrastructure? It’s not about running on open source software. It’s about creating platform choice and control. In my experience, that’s what defines open for users (and developers are not users).

So, what is open infrastructure? It’s not about running on open source software. It’s about creating platform choice and control. In my experience, that’s what defines open for users (and developers are not users).