Internally, my group (specifically Dave McCrory & Greg Althaus) has been kicking around some new ways of expressing clouds in an effort to help reconcile Dell’s traditional and cloud-focused businesses. We’ve found it challenging to translate the CAP theorem and externalized application state into more enterprise-ready concepts.

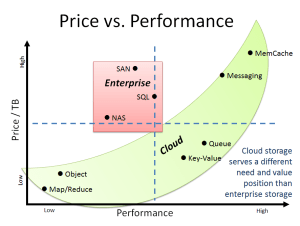

Our latest effort led to a pleasantly succinct explanation of why cloud storage is different from enterprise storage. Ultimately, it’s a matter of control and optimization. Cloud persistence (Cache, Queue, Tables, Objects) is functionally diverse in order to optimize for price and performance, while enterprise storage (SAN, NAS, SQL) is driven by control and centralization. Unfortunately for enterprises, the data genie is out of the Pandora’s box with respect to architectures that drive much lower cost and higher performance.

The background on this irresistible transformation begins with seeing storage as a spectrum of services as per the table below.

| Class | Storage type | Protocols and example products |
| --- | --- | --- |
| Enterprise: Consistent | Block (SAN) | iSCSI, InfiniBand: Amazon EBS, EqualLogic, EMC Symmetrix |
| Enterprise: Consistent | File (NAS) | NFS, CIFS: NetApp, PowerVault, EMC CLARiiON |
| Enterprise: Consistent | Database (ACID) | MS SQL, Oracle 11g, MySQL, Postgres |
| Cloud: Distributed Partitioned | Object | DX/Caringo, OpenStack Swift, EMC Atmos |
| Cloud: Distributed Partitioned | Map/Reduce | Hadoop DFS |
| Cloud: Distributed Partitioned | Key Value | Cassandra, CouchDB, Riak, Redis, Mongo |
| Cloud: Distributed Partitioned | Queue (Bus) | RabbitMQ, ActiveMQ, ZeroMQ, OpenMQ, Celery |
| Cloud: Transitory | Messaging | AMQP, MSMQ (.NET) |
| Cloud: Transitory | Shared RAM | MemCache, Tokyo Cabinet |

From this table, I approximated the relative price and performance for each component in the storage spectrum.

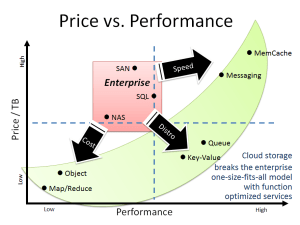

The result was the “cloud storage banana” graph. In this graph, enterprise storage is clustered in the “compromise” quadrant, where there’s a high price for relatively low performance. Cloud persistence refuses to be clustered at all. To save cost and enable distributed data, applications will use cheap but slow object storage. This drives the need for high-speed, RAM-based caches and distributed buses. These approaches are required when developers build fault tolerance at the application level.
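To make the pairing of slow object storage with a RAM cache concrete, here is a minimal sketch of a cache-aside read path. Everything in it is hypothetical: `SlowObjectStore` and `CacheAside` are illustrative names, the plain dictionaries stand in for a real object store and a memcached-style cache, and the latency is simulated with a sleep.

```python
import time


class SlowObjectStore:
    """Hypothetical stand-in for a cheap, high-latency object store."""

    def __init__(self, latency_s=0.01):
        self._blobs = {}
        self._latency_s = latency_s
        self.reads = 0  # count backend reads so the cache effect is visible

    def put(self, key, value):
        self._blobs[key] = value

    def get(self, key):
        time.sleep(self._latency_s)  # simulate slow storage
        self.reads += 1
        return self._blobs[key]


class CacheAside:
    """Check RAM first, fall back to the object store on a miss."""

    def __init__(self, store):
        self._store = store
        self._ram = {}  # assumption: stands in for a shared RAM cache

    def get(self, key):
        if key in self._ram:
            return self._ram[key]
        value = self._store.get(key)  # slow path
        self._ram[key] = value        # remember it for the next reader
        return value

    def put(self, key, value):
        self._store.put(key, value)
        self._ram.pop(key, None)  # invalidate so the next read refetches


if __name__ == "__main__":
    store = SlowObjectStore()
    cache = CacheAside(store)
    cache.put("report", b"q3 numbers")
    cache.get("report")  # miss: goes to the object store
    cache.get("report")  # hit: served from RAM
    print("backend reads:", store.reads)
```

The point of the sketch is that the application, not the storage layer, decides what is worth keeping in fast memory. That is the design shift the banana graph describes.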

Enterprises have enjoyed the false luxury of perceived hardware reliability. Where these assumptions are removed, applications are freed to scale more gracefully and consider resource cost in their consumption plans.

When we compare the enterprise Pandora’s box storage to the cloud persistence banana, a more general pattern emerges. The cloud persistence pattern represents a fragmentation of monolithic, IT-controlled services into a more function-driven architecture. In this case, we see the desire for speed, distribution and cost savings forcing change to application design patterns.

We also see similar dispersion patterns driving changes in compute and networking conventions.

So next time your corporate IT refuses to deploy RabbitMQ or memcached, just remember my mother’s sage advice for cloud architects: “time flies like an arrow, fruit flies like a banana.”