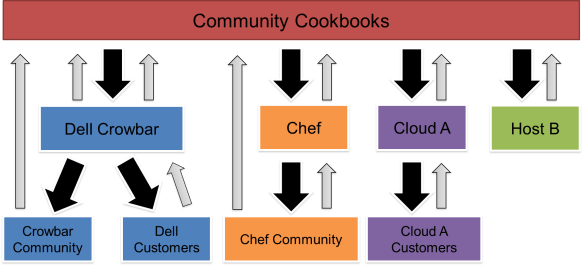

One of the major Crowbar 2.0 design targets is to make it easier for you to “upstream” operations scripts. “Upstream code” means that parts of Crowbar’s source code could be maintained in other open source repositories. This goes beyond a simple dependency (like Rails, Curl, Java or Apache): upstreaming allows Crowbar to use code that is managed in other open source repositories for more general applications. This is important because Crowbar users can leverage DevOps logic that is more broadly targeted than just Crowbar. Even more importantly, upstreaming means that we can contribute to, and take advantage of, community efforts to improve the upstream source.

Specifically, Crowbar maintains a set of OpenStack cookbooks that make up the core of our OpenStack deployment. These scripts have been widely cloned (not forked) and de-Crowbarized for other deployments. Unfortunately, that means we do not benefit from downstream improvements, and the cloners cannot easily track our updates. This happened because Crowbar was not considered a valid upstream OpenStack repository: our deployment scripts required Crowbar. The consequence of this cloning is that incompatible OpenStack recipes have propagated like cracks in a windshield.

While there are concrete benefits to upstreaming, there are risks too. We have to evaluate whether the upstream code has been adequately tested, operates effectively, implements best practices and leverages Crowbar capabilities. I believe strongly that untested deployment code is worse than useless; consequently, the Dell Crowbar team provides significant value by validating that our deployments work as an integrated system. Even more importantly, we will not upstream from unmoderated sources where changes are accepted without regard for downstream impacts. Upstreaming only works when there is a significant amount of trust.

If upstreaming is so good, why did we not start out with upstream code? It simply was not an option at the time: Crowbar was the first (and is still the most!) complete set of DevOps deployment scripts for OpenStack in a public repository.

We have reached a point in Crowbar development where we can correctly decouple Crowbar and Chef.
