OpenStack got into exactly the place we expected: operations started with fragmented and divergent data centers (aka snowflaked) and OpenStack did nothing to change that. Can we fix that? Yes, but the answer involves relying on Amazon as our benchmark.

In advance of my OpenStack Summit Demo/Presentation (video!) [slides], I’ve spent the last few weeks mapping seven (and counting) OpenStack implementations into the cloud provider subsystem of the Digital Rebar provisioning platform. Before I started working on adding OpenStack integration, RackN already created a hybrid DevOps baseline. We are able to run the same Kubernetes and Docker Swarm provisioning extensions on multiple targets including Amazon, Google, Packet and directly on physical systems (aka metal).

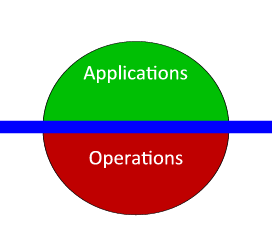

Before we talk about OpenStack challenges, it’s important to understand that data centers and clouds are messy, heterogeneous environments.

These variations are so significant and operationally challenging that they are the fundamental design driver for Digital Rebar. The platform uses a composable operational approach to isolate and then chain automation tasks together. That allows configurations, like networking, from infrastructure specific functions to be passed into common building blocks without user intervention.

Composability is critical because it allows operators to isolate variations into modular pieces and the expose common configuration elements. Since the pattern works successfully for crossing other clouds and metal, I anticipated success with OpenStack.

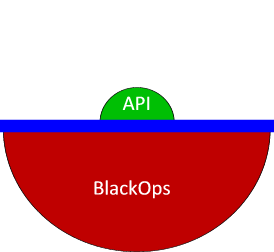

The challenge is that there is not “one standard OpenStack” implementation. This issue is well documented under OpenStack as Project Shade.

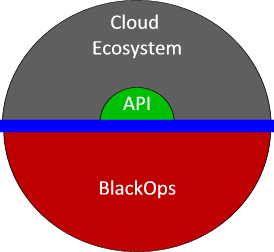

If you only plan to operate a mono-cloud then these are not concerns; however, everyone I’ve met is using at least AWS and one other cloud. This operational fact means that AWS provides the common service behavior baseline. This is not an API statement – it’s about being able to operate on the systems delivered by the API.

While the OpenStack API worked consistently on each tested cloud (win for DefCore!), it frequently delivered systems that could not be deployed or were unusable for later steps.

While these are not directly OpenStack API concerns, I do believe that additional metadata in the API could help expose material configuration choices. The challenge becomes defining those choices in a reference architecture way. The OpenStack principle of leaving implementation choices open makes it challenging to drive these options to a narrow set of choices. Unfortunately, it means it is difficult to create an intra-OpenStack hybrid automation without hard-coded vendor identities or exploding configuration flags.

As series of individually reasonable options dominoes together to make to these challenges. These are real issues that I made the integration difficult.

- No default of externally accessible systems. I have to assign floating IPs (an anti-pattern for individual VMs) or be on the internal networks. No consistent naming pattern for networks, types (flavors) or starting images. In several cases, the “private” network is the publicly accessible one and the “external” network is visible but unusable.

- No consistent naming for access user accounts. If I want to ssh to a system, I have to fail my first login before I learn the right user name.

- No data to determine which networks provide which functions. And there’s no metadata about which networks are public or private.

- Incomplete post-provisioning processes because they are left open to user customization.

There is a defensible and logical reason for each example above; sadly, those reasons do nothing to make OpenStack more operationally accessible. While intra-OpenStack interoperability is helpful, I believe that ecosystems and users benefit from Amazon-like behavior.

What should you do? Help broaden the OpenStack discussions to seek interoperability with the whole cloud ecosystem.

At RackN, we will continue to refine and adapt to these variations. Creating a consistent experience that copes with variability is the raison d’etre for our efforts with Digital Rebar. That means that we ultimately use AWS as the yardstick for configuration of any infrastructure from physical, OpenStack and even Amazon!